Ansible and vRealize Automation (vRA) are both popular DevOps tools for infrastructure automation and provisioning. However, the two tools have different strengths and use cases, and choosing the right one for your organization can be a challenge. In this blog post, we’ll explore the key differences between vRA and Ansible and why you might choose vRA over Ansible.

- Complexity of Deployment

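Ansible is a simple, agentless tool that is easy to install and configure: you write playbooks and run them from a control node, typically over SSH. However, as your deployments grow more complex, that simplicity can become a hindrance, because Ansible on its own has no built-in service catalog, approval workflow, or lifecycle tracking for the resources it deploys. vRA, on the other hand, is a heavier product to stand up, but it is designed for these needs, with cloud templates (blueprints), a self-service catalog, and approval and lease policies. That makes it a strong choice for large, complex environments.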
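
To make the "simple and agentless" point concrete, here is a minimal sketch of an Ansible playbook. The `webservers` host group, the `chrony` package, and the `chronyd` service name are assumptions chosen for illustration (the service name follows RHEL-family conventions and may differ on other distributions).

```yaml
---
# Minimal illustrative playbook; host group and package names are assumptions.
- name: Ensure time synchronization is installed and running
  hosts: webservers          # hypothetical inventory group
  become: true               # run tasks with elevated privileges
  tasks:
    - name: Install chrony
      ansible.builtin.package:
        name: chrony
        state: present

    - name: Start and enable chronyd
      ansible.builtin.service:
        name: chronyd        # assumed service name (RHEL-family)
        state: started
        enabled: true
```

With a hypothetical inventory file `inventory.ini` and the playbook saved as `site.yml`, this would be run with `ansible-playbook -i inventory.ini site.yml`. Nothing needs to be installed on the target hosts beyond SSH access and Python, which is where the simplicity comes from. Note what is absent, though: no request form, approval step, or expiry date, which is the gap vRA is meant to fill.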

- Integration with Other Tools

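vRA integrates natively with the rest of the VMware stack, including vSphere, NSX, and vRealize Operations, allowing you to manage and automate the entire software-defined data center from one place. Ansible can also talk to these products through modules such as those in the `community.vmware` collection, but that integration works at the level of individual tasks that you assemble and maintain yourself, which can lead to a more fragmented environment.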

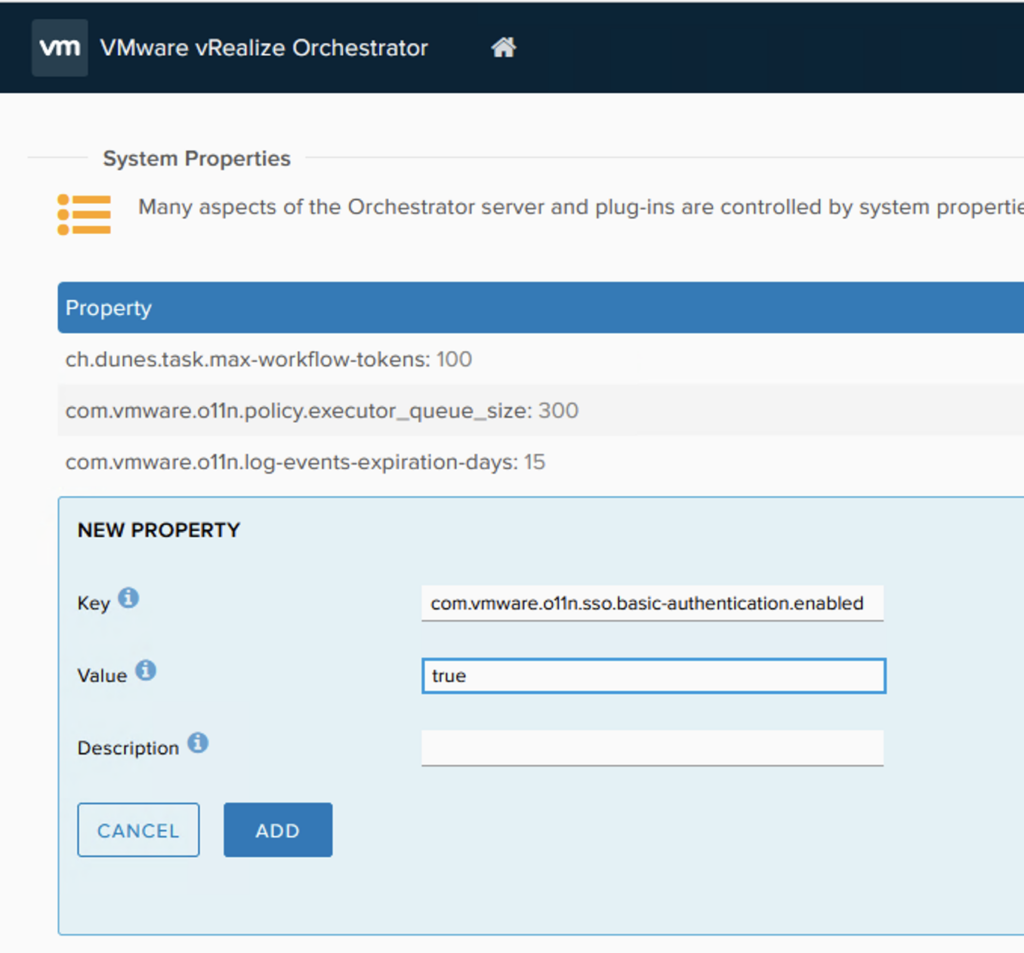

- User Interfaces

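vRA has a rich, web-based interface that includes a self-service catalog, so users can request and manage infrastructure without writing code. The interface is intuitive and easy to use, even for those with limited technical skills. Ansible itself, on the other hand, is driven from the command line and from YAML playbooks. A web interface is available only through separate products, namely the open source AWX project or Red Hat Ansible Automation Platform, which makes plain Ansible harder for non-technical users to adopt.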

- Scalability

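vRA is designed to scale as your organization grows: it can be deployed as a cluster of appliances behind a load balancer, allowing you to manage an increasing number of servers and applications. Ansible also scales, but it pushes changes from a control node over a limited number of parallel connections (the `forks` setting, which defaults to 5), so very large estates usually need tuning or additional tooling on top. For large enterprises, that can make it a less ideal choice than vRA.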

- Cost

Ansible is open source, which means that the core tool is free to use, although enterprise support and the supported web-based controller come with a paid Red Hat Ansible Automation Platform subscription. vRA, on the other hand, is a commercial product that always requires a license. While the cost of vRA may be a concern, the catalog, governance, and integration features it offers can make it a better choice for organizations that need a more robust automation solution.

In conclusion, while both Ansible and vRealize Automation have their strengths, vRA is the more complete solution for large, complex environments. Its native integration with other VMware tools, rich web-based interface, and scalability make it a better choice for organizations that need a robust infrastructure automation platform and can justify the license cost.